Welcome to BomWatch.com.au – a site dedicated to examining Australia’s Bureau of Meteorology, climate science and the climate of Australia. The site presents a straight-down-the-line understanding of climate (and sea level) data and objective and dispassionate analysis of claims and counter-claims about trend and change.

Dr. Bill Johnston is a former senior research scientist with the NSW Department of Natural Resources (abolished in April 2007), which in previous iterations included the Soil Conservation Service of NSW, the NSW Water Conservation and Irrigation Commission, the NSW Department of Planning and the Department of Lands. As with other NSW natural resource agencies that conducted research as a core activity, including NSW Agriculture and the National Parks and Wildlife Service, its research services were mostly disbanded or dispersed to the university sector from about 2005.

Daily weather observations undertaken by staff at the Soil Conservation Service’s six research centres at Wagga Wagga, Cowra, Wellington, Scone, Gunnedah and Inverell were reported to the Bureau of Meteorology. Bill’s main fields of interest have been agronomy, soil science, hydrology (catchment processes) and descriptive climatology and he has maintained a keen interest in the history of weather stations and climate data. Bill gained a Bachelor of Science in Agriculture from the University of New England in 1971, Master of Science from Macquarie University in 1985 and Doctor of Philosophy from the University of Western Sydney in 2002 and he is a member of the Australian Meteorological and Oceanographic Society (AMOS).

Bill receives no grants or financial support or incentives from any source.

How BomWatch operates

BomWatch is not intended to be a blog per se, but rather a repository for analyses and downloadable reports relating to specific datasets or issues, which will be posted irregularly so they are available in the public domain and can be referenced to the site. Issues of clarification, suggestions or additional insights will be welcome.

The areas of greatest concern are:

- Questions about data quality and data homogenisation (is data fit for purpose?)

- Issues related to metadata (is metadata accurate?)

- Whether stories about datasets are consistent and justified (are previous claims and analyses replicable?)

Some basic principles

Much is said about the so-called scientific method of acquiring knowledge by experimentation, deduction and testing hypotheses using empirical data. According to Wikipedia, the scientific method involves careful observation, rigorous scepticism about what is observed … formulating hypotheses … testing and refinement etc. (see https://en.wikipedia.org/wiki/Scientific_method).

The problem for climate scientists is that data were not collected at the outset for measuring trends and changes, but rather to satisfy other needs and interests of the time. For instance, temperature, rainfall and relative humidity were initially observed to describe and classify local weather. The state of the tide was important for avoiding in-port hazards and risks and for navigation – ships would leave port on a falling tide, for example. Surface air pressure was used to forecast wind strength and direction and to warn of atmospheric disturbances, while at airports, temperature and relative humidity critically affected aircraft performance on takeoff and landing.

Commencing in the early 1990s, the ‘experiment’, which aimed to detect trends and changes in the climate, has been bolted on to datasets that may not be fit for purpose. Further, many scientists have no first-hand experience of how data were observed or of other nuances that might affect their interpretation. Also, since about 2015, various data arrive every 10 or 30 minutes on spreadsheets, to newsrooms and television feeds, largely without human intervention – there is no backup paper record and no way to certify that those numbers accurately portray what is going on.

For historic datasets, present-day climate scientists had no input into the design of the experiment from which their data are drawn, and in most cases information about the state of the instruments and the conditions that affected observations is obscure.

Finally, climate time-series represent a special class of data for which usual statistical routines may not be valid. For instance, if data are not free of effects such as site and instrument changes, a naïvely determined trend might be spuriously attributed to the climate when in fact it results from inadequate control of the data-generating process: the site may have deteriorated, for example, or ‘trend’ may be due to construction of a road or building nearby. It is a significant problem that site-change impacts are confounded with the variable of interest (i.e. there are potentially two signals, one overlaid on the other).
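The confounding described above can be illustrated with a small synthetic example. This is a minimal sketch only: the series, the 0.5-degree step, and the changepoint position are invented for illustration, and the changepoint is assumed to be known, which in practice it often is not. A series with no underlying trend but a single step change yields a positive slope when fitted naïvely, while a fit that includes a step term recovers a slope close to zero.

```python
import numpy as np

# Synthetic series (illustrative only): 70 annual values with NO true trend,
# but a 0.5-degree step change at year 35, e.g. a hypothetical site move.
rng = np.random.default_rng(1)
t = np.arange(70, dtype=float)
changepoint = 35                      # assumed known for this sketch
step = (t >= changepoint).astype(float)
y = 20.0 + 0.5 * step + rng.normal(0.0, 0.05, size=t.size)

# Naive fit: straight line through everything, ignoring the step.
naive_slope = np.polyfit(t, y, 1)[0]

# Joint fit: intercept + linear trend + step indicator, by least squares.
X = np.column_stack([np.ones_like(t), t, step])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
intercept, adjusted_slope, step_size = coef

print(f"naive slope:    {naive_slope:.4f} per year")
print(f"adjusted slope: {adjusted_slope:.4f} per year")
print(f"fitted step:    {step_size:.3f}")
```

The joint fit only separates the two signals because the changepoint is supplied correctly; a mis-specified changepoint would leave part of the step in the slope.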

What is an investigation and what constitutes proof?

The objective approach to investigating a problem is to challenge the straw-horse argument that there is NO change, NO link between variables, NO trend; everything is the same. In other words, test the hypothesis that data consist of random numbers or, as is the case in a court of law, that the person in the dock is unrelated to the crime. The task of an investigator is to open-handedly test that case. Statistically called a NULL hypothesis, the question is evaluated using probability theory, essentially: if the NULL hypothesis were true, how probable would data like these be?

In law a person is innocent until proven guilty and a jury holding a majority view of the available evidence decides ‘proof’. However, as evidence may be incomplete, contaminated or contested, the person is not necessarily totally innocent – he or she is simply not guilty.

In a similar vein, statistical proof is based on the probability that data don’t fit a mathematical construct that would be the case if the NULL hypothesis were true. As a rule-of-thumb, if there is less than a 5% probability (stated as P < 0.05) of obtaining data at least as extreme as those observed when the NULL hypothesis is true, the NULL is rejected in favour of the alternative. Where the NULL is rejected the alternative is referred to as significant. Thus in most cases ‘significant’ refers to a low P level. For example, if the test for zero-slope finds P is less than 0.05, the NULL is rejected at that probability level and trend is ‘significant’: there is less than a 1 in 20 chance that a trend that strong (trend measures the association between variables) would arise by chance alone. In contrast, if P > 0.05, trend is not significantly different to zero-trend.
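As a rough illustration of a test for zero-slope, the sketch below uses a permutation test rather than the usual t-test, simply so it needs nothing beyond NumPy. The function names, the synthetic series and the number of permutations are all assumptions made for the example. Shuffling the values destroys any association with time, so the proportion of shuffled series with a slope at least as steep as the observed one estimates P under the NULL.

```python
import numpy as np

def slope(x, y):
    """Ordinary least-squares slope of y regressed on x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    xc = x - x.mean()
    return float(np.dot(xc, y - y.mean()) / np.dot(xc, xc))

def permutation_p_value(x, y, n_perm=2000, seed=0):
    """Two-sided permutation P-value for the NULL hypothesis of zero slope."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    observed = abs(slope(x, y))
    hits = 0
    for _ in range(n_perm):
        # Shuffling y breaks any link with x, mimicking the NULL.
        if abs(slope(x, rng.permutation(y))) >= observed:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_perm + 1)

# Synthetic data (illustrative only): same noise, with and without a trend.
rng = np.random.default_rng(42)
years = np.arange(1950, 2020)
noise = rng.normal(0.0, 0.5, size=years.size)
flat = 20.0 + noise
trending = 20.0 + 0.03 * (years - years[0]) + noise

p_flat = permutation_p_value(years, flat)
p_trend = permutation_p_value(years, trending)
print(f"no-trend series: P = {p_flat:.3f}")
print(f"trending series: P = {p_trend:.4f}")
```

A permutation test assumes the values are exchangeable under the NULL. Climate series are often autocorrelated or contain step changes, so a low P from a sketch like this does not by itself show the trend is climatic.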

Combined with an independent investigative approach BomWatch relies on statistical inference to draw conclusions about data. Thus the concepts briefly outlined above are an important part of the overall theme.


Very interesting Bill, I’m still learning. But then, I was trained to fix my gaze on what was underfoot, not what was up in the sky. Will you be posting notifications when new material is added to the site or will you leave that to us to check from time to time? Keep up the good work.

Dr Johnston, please point me to the topic of the BOM allegedly altering historical temperature records to exaggerate warming climate. Is this true?

Sincerely

Gunther Jank

Perth

Hello Gunther, Sorry I missed your comment.

Homogenisation is the process used by the Bureau to adjust for the effect of site and instrument changes (and joining datasets together) on so-called raw data. Regardless of method, of which there are several, incorrect detection of changepoints (for instance due to faulty metadata, which is data about the data) or incorrect adjustments result in biased trends in homogenised data.

At all the sites we have thus far analysed, adjustments have been made haphazardly (changepoints have been mis-specified) or the adjustments themselves do not reflect the actual change in the data. It is also concerning that three rounds of homogenisation of the same data apply different adjustments, some at different times, which of course results in different trends in homogenised series.

Check out Charleville for example.

Thank you for your interest,

Cheers,

Bill

You can make up your own mind

Data sourced from the BOM

http://www.waclimate.net/acorn2/

Thanks very much for that link Jeff. It certainly makes for fascinating reading. Maybe it should go into the category of alarming reading. I recommend that visitors to this site also click through to the link you have provided. But I also ask visitors who click through from this site to make sure they click back to this site to read further detail about Dr Bill Johnston’s research. It makes for plenty more ‘alarming reading’.

Günther, I heard a scientist speaking about modelling for ‘climate change’ point out that the alarmist modelling encompasses only several years of data, when 30-40 years of data should be used, as that’s the period generally accepted as the climate cycle, if you will. It is fascinating all the same and, as with all science, we should be open to new data and change our mindset accordingly.

G’day Bill, just found out about your endeavors after chatting to Patricia and Michael (neighbours). So refreshing to find others with similar views regarding the climate hysteria. Have been keenly following the debate on various sites (Australian Climate Skeptics, etc.), and if we can push on with exposing the lies to others, we may eventually convince more people of the fools they have been made. Cheers, Mal.

Thanks Mal,

Cheers,

Bill

Very interesting article. Lots of work in there, Bill! No doubt you would spread these works widely? Well done. Regards, John

Thanks for your comment John. Yes, there has been an enormous amount of work put into this by Bill. In some ways I think it is almost the focus of his life’s work. He has a real commitment to it and a sense of urgency about it.

From one perspective it is also a hard message to sell because our approach on this site has not been the typical ‘journalese’ style of click bait, ten-second-sound-bites, and manipulative use of emotion. We strive to present verifiable facts and the Papers that appear here are based on those facts. The interesting thing is that many of the facts – such as raw temperature data – come from the BOM itself.

Many other facts – such as site changes at weather stations and step changes in the temperature records – are things that the BOM should know but doesn’t. The poor state of the Metadata history at weather stations cited on this website is a clear case of this.

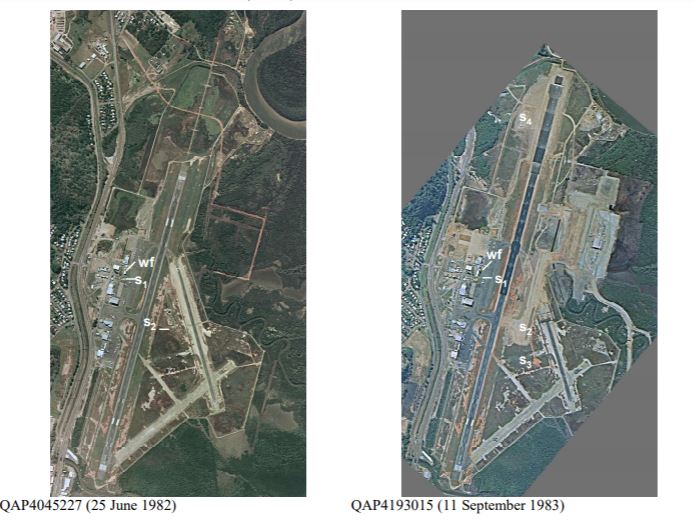

We have used credible sources – such as RAAF air photography and various departments of works documents – to prove our assertion that, in many cases, the BOM simply lacks detailed knowledge of its own Metadata. I hope that visitors to this site will take the time to carefully read Bill’s papers and come to agreement with us on this. I hope that officials from the BOM will do the same.

With regard to your comment that we should spread the information widely, we certainly aim to do this and any word-of-mouth awareness you can generate would be most appreciated.

David Mason-Jones, Publisher

Thanks David. Hard to believe how much time and energy has been spent by supposed scientific bodies in promoting the fallacy of “global warming” and then “climate change”. No doubt sometime in the future the World must wake up to the nonsense, mainly because of work from Bill and so many others. Keep up the fine work. Regards and thank you for reply. John.

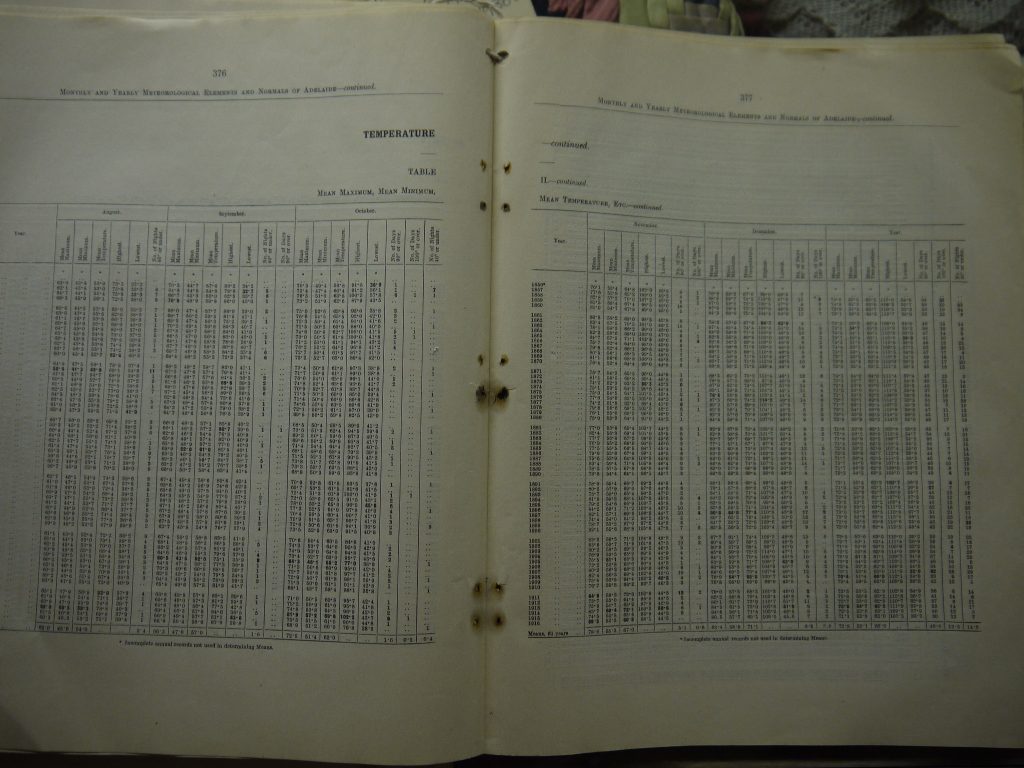

Love it. That is why, when I was packing up Mum’s belongings after her death and came across a Commonwealth Year Book for 1966, I chose to keep it. It is an amazing little encyclopaedia of data on what was happening in Australia, with everything from births to unemployment to little maps of Australia showing rain, temperature, wind, frost etc. for January and July of 1965. They are trying to get rid of all books, and computer data can be changed without anyone knowing. With a book there is no chance of it being changed, unless they burn the book.

I spent considerable time examining the BOM data in an effort to find rational reasons as to why the BOM were altering data in the way they were.

I can, after examining the raw data from all of our long-term high-quality stations, categorically say without any doubt the BOM is deliberately creating warming where none exists.

I created a FB group several years back to collate this data; it’s available at https://www.facebook.com/groups/1518817361673585

The most common clunker I tend to see is linear time trends fitted to data sets that have clearly apparent oscillation patterns in addition to the short-term ‘noise’. The worst I saw of this was a data set of sea level in Sydney Harbour where the mean sea level clearly oscillated with the El Nino-La Nina cycle and there was an uptrend in the peaks and troughs.

The authors of the paper however did a linear trend fit which had a much steeper slope. It was plain as day that what they had done was crunched the numbers on a data set that started at a ‘trough’ and finished at a ‘peak’. A data set conforming to a pure sine wave would have generated a similarly dramatic uptrend with the same start-end features.

So how the heck do (apparently) senior scientists make such pre-HSC level blunders?

Surely not deliberately? Is the word ‘dramatic’ the key indicator here? Get the media all in a fluster with a trick they are unlikely to pick or would happily ignore anyway.

I am an engineer by profession so tend to pay close attention to the numbers and quality of input data etc.

If you read about Sydney Harbour, you may also be interested in this piece from SA I found some months ago on land subsidence at the Port of Adelaide by A Belperio, where he goes to great lengths and spends much time making the argument that Pt Adelaide is sinking due to buildings, mangrove clearing and ground water pumping and not, as the IPCC says, rising sea levels, as they use the Pt Adelaide tide gauge as one of their markers.

https://www.researchgate.net/publication/248955091_Land_subsidence_and_sea_level_rise_in_the_Port_Adelaide_estuary_Implications_for_monitoring_the_greenhouse_effect